I’ve been working in the converging worlds of automation and AI for a number of years now. During that time, my colleagues and I have delivered a number of projects, some exciting and some less so, all of which, we like to think, have made fundamental changes to our clients’ organisational makeup. Yet when I speak with senior people in business, many of whom are visionaries in their chosen fields, I’m often faced with a particular strain of narrow thinking when it comes to automation strategy, and the reasoning behind it gives me cause for concern.

Why do we keep kicking the can down the road?

The real challenge in avoiding adoption failure is overcoming the harsh limitations of linear automation. So why are so many businesses thinking in the short term, and merely moving the cost of automation further down the line?

Over the past few years, and previously in many less formally recognised ways, we’ve been using linear automation systems to do the work formerly assigned to humans. Most recently, this has been formalised as Robotic Process Automation (RPA) and has had a profound impact on the businesses it’s been implemented within. We’ve seen these systems driving the checks and balances in banking, automating background and anti-money laundering searches, and streamlining the way in which we welcome new clients. We’ve seen it helping people pluck the right products from a wide choice on offer, or even offering loans or credit for the masses who qualify — all in a lean, straightforward fashion. But, as per the segmentary principles of Taylorism, we’ve found quite quickly that when we need a system to do more than, say, carry iron from one place to another in a straight line, we fall back on humans to look at the anomalies.

A familiar riposte here is to say we should be looking at AI (this tends to refer to some form of Machine Learning) to break these linear boundaries of problem solving. True enough, this approach can undoubtedly do a better job of handling outliers, with the ability to pick up information from previous cases and identify or even predict subsequent actions. But we have a way to go before these technologies give us the confidence we need to rely on them for potentially life-defining decisions. Backwards-looking patterns are not always the right sources to mine to be able to support new edge cases; humans will always need to be on hand to review and support learning.

My arguments here about AI or ML are weak, granted, because the technology itself isn’t challenged or struggling with these issues. But the industries that need to accept, adopt, engage with and have the data to feed these technologies are simply not ready yet. Data hygiene in insurance, for example, is low — according to Accenture, 97% of business decisions are made using data that the company’s own managers consider to be of unacceptable quality. That’s not to mention the cultural or skill barriers hampering AI adoption in traditional industries.

So this quandary, and the limitations of RPA, creates the need for support structures or human teams alongside new technology, thus defeating the objective of streamlined efficiency. As a result, early AI adopters have felt the pinch of costs without seeing the utopia of rewards they were promised. For many, the application of AI has become little more than an expensive (and failed) experiment.

Low-hanging fruit vs long-term transformation

This messy challenge is where I find myself on a regular basis. People say to me: “We understand your approach, but we’ll just try RPA first”, or “We’ll use RPA to pick up the low-hanging fruit.” Herein lies the difference between automating a set of tasks and transforming the way your business benefits from automation and AI; and it’s the former that will cost a lot more in the long run.

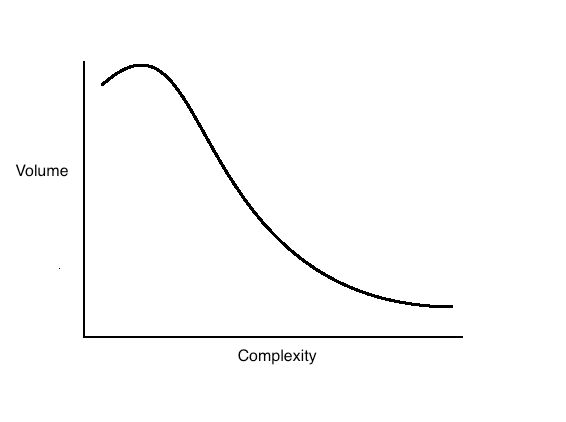

If we construct a visual of the traditional curve of the processes or tasks organisations carry out (see the accompanying chart), we have a trade-off between volume and complexity. This may seem generic in that it can be applied to any sector or scenario, but in essence the theory is simple and sound. The bulk of tasks are relatively simple and repetitive, with a less frequent portion of tasks that are more complex, or at least require a more situational approach. These less regular but more complex elements are where the cost lies for your business. In the long run, this is where businesses that opt for linear automation will lose out, with tools stuck in narrow processes. This is arguably why, according to KPMG, barely more than 1 in 10 enterprises have managed to reach industrialised scale with RPA.

When outliers escalate

The more complex scenarios on the spectrum don’t fit easily into forward-chaining decision tools; they may be slightly varied, or different in any number of ways, but essentially they don’t fit the mould of repetitive, bulk tasks. We see this a lot in high-volume call centres, where automated systems struggle to match an issue to a predefined solution due to a slight situational change; a small variation, sure, but more than enough to break the flow. If this occurs across multiple areas of a business, pools of exceptions build up, and they need to be dealt with.
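To make the call-centre example concrete, here is a minimal sketch in Python of a linear flow that can only resolve issues matching a predefined solution exactly. The `LinearFlow` class, the issue strings and the solutions are all hypothetical, invented purely for illustration; real RPA tools are far richer, but the failure mode is the same in spirit.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class LinearFlow:
    """Hypothetical linear automation: an issue must match a predefined key exactly."""
    solutions: Dict[str, str]
    exceptions: List[str] = field(default_factory=list)

    def handle(self, issue: str) -> Optional[str]:
        solution = self.solutions.get(issue)
        if solution is None:
            # No exact match: the flow breaks and the case joins the exception pool.
            self.exceptions.append(issue)
        return solution


# Illustrative data only (assumed, not taken from any real system).
flow = LinearFlow(solutions={
    "card lost": "cancel card and issue replacement",
    "forgot password": "send password reset link",
})

calls = [
    "card lost",
    "forgot password",
    "card lost while abroad",             # a small situational change
    "forgot password and email address",  # another slight variation
]

resolved = [call for call in calls if flow.handle(call) is not None]
print(len(resolved), "resolved;", len(flow.exceptions), "queued for humans")
```

Half of these calls fall out of the flow despite being close cousins of cases it handles well, which is how the pools of exceptions start to form.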

Why is it, though, that these outliers are so toxic to the efficiencies their creators set out to achieve? Put simply, they don’t have, and likely never will have, a business case for dealing with them alone. These exceptional cases therefore build, become more widespread, and over time become progressively more costly to tackle. Think back to the volume/complexity diagram; what we’re left with is a collection of the ‘long tails’ of complex scenarios that simple, high-volume automation has created, and which cannot easily be swept up.

Rules-based, but not linear

If I’m starting to sound like the bearer of bad news, there is a solution to cushion the blow, but it starts with businesses breaking their habit of plumping for short-term gain. Rather than jumping at the chance to automate high-volume cases and allowing the more valuable and complex cases to build up in a quagmire, businesses need to start with the complex stuff. This requires using technology that is rules-based, but not bound by a linear approach to supporting organisational efficiency. If we codify how experts carry out the complex or the technical, within that high-value information lie the answers to how to tackle the simple tasks too. By automating the right-hand end of the volume/complexity chart, we’re able to cover off the ‘volume’ part of the gradient, which is impossible the other way around.

In short, dealing with the situational cases means we’re able to automate the less situational with ease — and preempt any nasty surprises lurking around the corner. Choosing the right technology now reduces the need to deal with the hidden costs to come.